Microsoft Research has announced the release of Phi-2, a small language model (SLM) that demonstrates surprising capabilities for its size. The model, launched today, was first unveiled at Microsoft’s Ignite 2023 event, where Satya Nadella highlighted its ability to achieve state-of-the-art performance with just a fraction of the training data.

Unlike GPT, Gemini, and other large language models (LLMs), SLMs are trained on smaller data sets, use fewer parameters, and require less computation to run. As a result, they cannot generalize as well as large language models, but in the case of Phi, they can be very good and efficient at certain tasks, such as mathematics and computation.

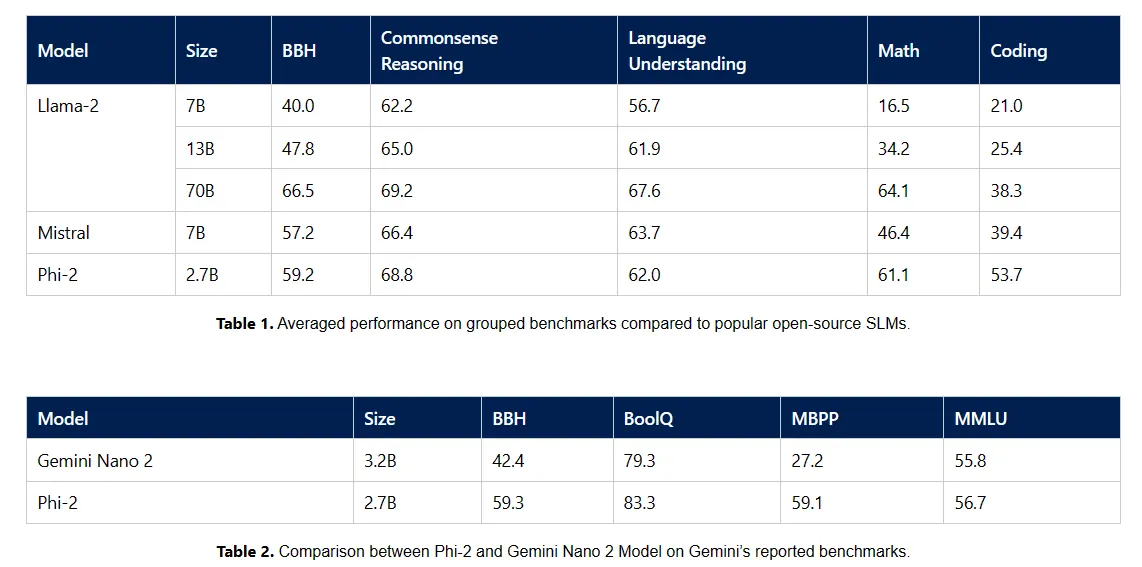

According to Microsoft, with 2.7 billion parameters, Phi-2 demonstrates reasoning and language understanding comparable to models up to 25 times its size. This stems from Microsoft Research’s focus on high-quality training data and advanced scaling techniques, which lets it build models that outperform older ones on a variety of benchmarks, including math, coding, and common sense reasoning.

“With just 2.7 billion parameters, Phi-2 outperforms the Mistral and Llama-2 models at 7B and 13B parameters across a variety of aggregated benchmarks,” Microsoft said, taking a jab at the latest AI models. “Phi-2 matches or exceeds the performance of the recently announced Google Gemini Nano 2, despite its smaller size.”

Gemini Nano 2 is Google’s latest investment in a multimodal LLM that can run locally. It was announced as part of the Gemini LLM family, which is expected to replace PaLM-2 in most Google services.

But Microsoft’s approach to AI goes beyond model development. The launch of its custom chips, Maia and Cobalt, shows that the company is moving toward fully integrating AI and cloud computing. The chips, which are optimized for AI tasks, support Microsoft’s larger vision of blending hardware and software capabilities and compete directly with Google Tensor and Apple’s new M-series chips.

It is important to remember that Phi-2 is a language model small enough to run locally on low-end devices, even smartphones. This lays the foundation for new applications and use cases.
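As a rough back-of-the-envelope check on that claim, the sketch below estimates the memory needed just to hold 2.7 billion weights at a few common numeric precisions. The list of precisions and the helper name `weight_memory_gb` are illustrative assumptions, not figures from Microsoft, and the estimate ignores activations, caches, and other runtime overhead.

```python
# Rough estimate of the memory needed to store model weights alone.
# Only the parameter count comes from the article; everything else is assumed.
PHI2_PARAMS = 2_700_000_000  # 2.7 billion parameters

# Bytes per parameter for a few common storage precisions (assumed here).
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: int, bytes_per_param: float) -> float:
    """Return weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bytes_per_param / 1e9


if __name__ == "__main__":
    for name, size in BYTES_PER_PARAM.items():
        print(f"{name}: {weight_memory_gb(PHI2_PARAMS, size):.2f} GB")
```

Under these assumptions, 16-bit weights come to about 5.4 GB and 4-bit weights to about 1.35 GB, which suggests that running on a phone would likely depend on a compressed version of the model.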

As Phi-2 enters the realm of AI research and development, its availability in the Azure AI Studio model catalog is also a step toward democratizing AI research. Microsoft is one of the most active contributors to open-source AI development.

As the AI landscape continues to evolve, Microsoft’s Phi-2 is proof that the AI world isn’t always about thinking bigger. Sometimes the greatest power lies in being smaller and smarter.

Edited by Ryan Ozawa.